If you’re still running Meta ads and optimizing based on last-click attribution, you’re making decisions on data that’s wrong. Not slightly off — materially, consequentially wrong. And the brands that figure this out faster are quietly eating your lunch.

I’ve worked with dozens of DTC brands in the $1M–$50M revenue range, and the single most common measurement mistake I see is treating Meta’s native reporting as ground truth. It isn’t. It never was. But in 2024 and beyond — with iOS privacy changes baked in, click IDs degrading, and competition for attention at an all-time high — clinging to last-click is like navigating by a map from 2018.

Here’s how to build a measurement framework that actually tells you what Meta is doing for your business.

Why Last-Click Attribution Fails DTC Brands

Last-click attribution does one thing: it gives 100% of the credit for a conversion to the final click before purchase. It sounds logical until you think about how people actually buy.

According to Google’s “See, Think, Do, Care” framework, most purchase decisions involve multiple touchpoints across multiple sessions over multiple days, sometimes weeks. A customer might see your Meta video ad three times, click a Google Shopping result, read a Reddit thread about your brand, and then buy via a direct visit. Last-click hands all the credit to direct. Meta gets zero. Your ROAS tanks. You cut budget. Sales drop. You scramble to figure out why.
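A minimal Python sketch of the journey above makes the mechanics concrete. It scores the same six touchpoints two ways: last-touch, where the final touchpoint takes 100% of the credit, and a simple linear rule that splits credit equally across every touchpoint. The channel labels and the linear rule are illustrative assumptions, not how any particular attribution tool computes credit.

```python
from collections import defaultdict

# Hypothetical journey from the example above: (channel, action), oldest first.
JOURNEY = [
    ("meta", "video view"),
    ("meta", "video view"),
    ("meta", "video view"),
    ("google", "shopping click"),
    ("reddit", "organic thread"),
    ("direct", "site visit"),
]

def last_touch_credit(journey):
    """Give 100% of the conversion to the channel of the final touchpoint."""
    final_channel, _ = journey[-1]
    return {final_channel: 1.0}

def linear_credit(journey):
    """Split the conversion equally across all touchpoints, summed by channel."""
    share = 1.0 / len(journey)
    credit = defaultdict(float)
    for channel, _ in journey:
        credit[channel] += share
    return dict(credit)

print(last_touch_credit(JOURNEY))  # {'direct': 1.0}
print(linear_credit(JOURNEY))      # meta gets about half; the other three about 1/6 each
```

Linear credit is still a rule of thumb rather than a measure of causation, which is why the layers below exist.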

The Meta Business Help Center itself acknowledges that the default 7-day click / 1-day view attribution window captures purchases that would have happened anyway — it can’t separate incremental lift from coincidence. A 2022 analysis by Measured, a media measurement firm, found that brands using last-click attribution systematically undervalued top-of-funnel channels by 30–50% while overvaluing bottom-of-funnel ones. That skew compounds over time into a spending strategy that kills awareness and starves growth.
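The window rule itself is simple, which is exactly the problem. Below is a simplified Python sketch, assuming a purchase counts as Meta-attributed when it lands within 7 days after a Meta click or within 1 day after a Meta view (inclusive bounds are an assumption). Real platform logic adds deduplication and tie-breaking that this ignores. Note that nothing in the rule asks whether the buyer would have purchased anyway.

```python
from datetime import datetime, timedelta

# Simplified 7-day click / 1-day view window (assumption: inclusive bounds).
CLICK_WINDOW = timedelta(days=7)
VIEW_WINDOW = timedelta(days=1)

def in_window(event_time, purchase_time, window):
    """True if the purchase happened at or after the event and within `window` of it."""
    elapsed = purchase_time - event_time
    return timedelta(0) <= elapsed <= window

def attributed_to_meta(purchase_time, click_times, view_times):
    """Credit Meta if any click is within 7 days or any view within 1 day before purchase."""
    return any(in_window(t, purchase_time, CLICK_WINDOW) for t in click_times) or any(
        in_window(t, purchase_time, VIEW_WINDOW) for t in view_times
    )

# A loyal customer who was already going to reorder scrolls past one ad an hour before buying.
purchase = datetime(2024, 3, 10, 14, 0)
ad_view = purchase - timedelta(hours=1)
print(attributed_to_meta(purchase, click_times=[], view_times=[ad_view]))  # True
```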

So what do you do instead?

The Three-Layer Measurement Stack

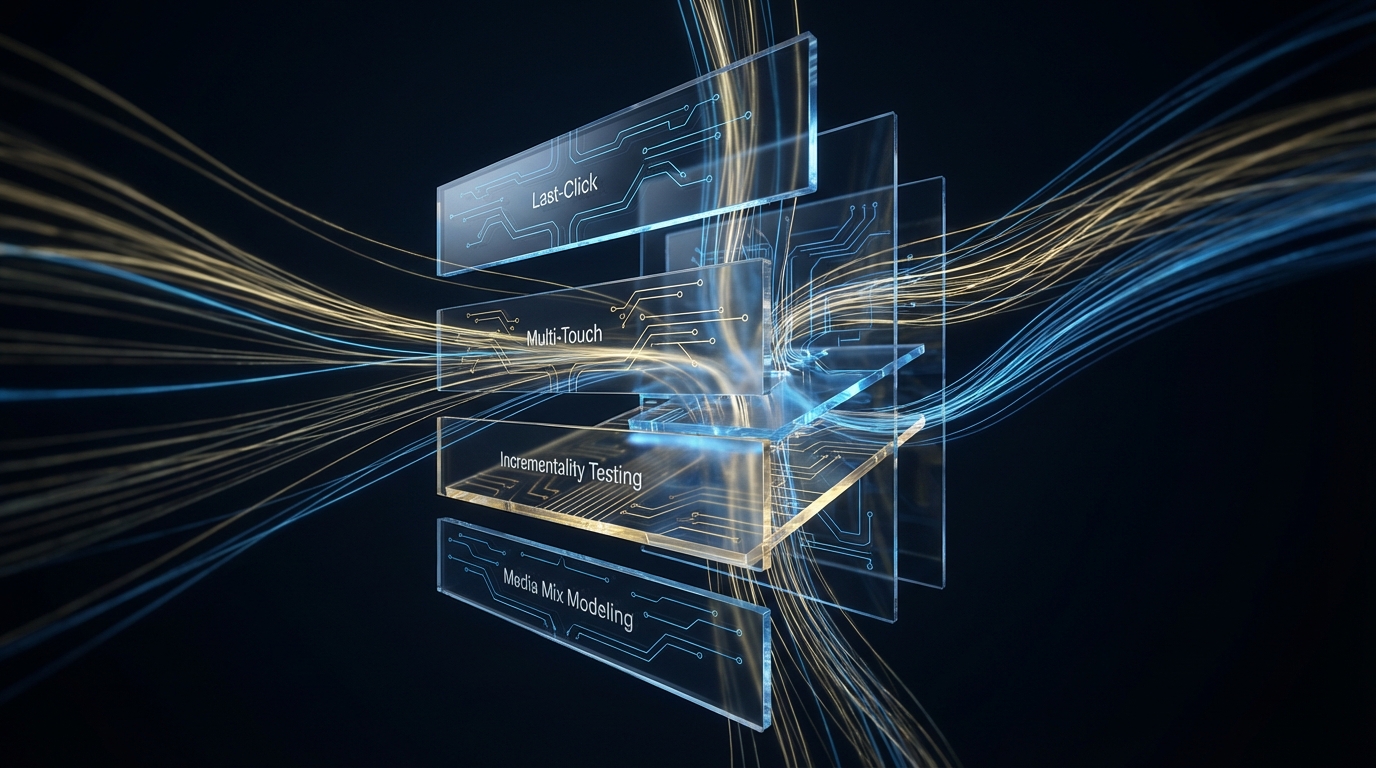

The brands I’ve seen win this game aren’t using any single measurement method. They’re running three layers simultaneously and triangulating.

Layer 1: Marketing Mix Modeling (MMM)

MMM is a statistical approach that uses historical data — spend, revenue, external factors like seasonality — to estimate the contribution of each channel without relying on user-level tracking. It’s privacy-safe, doesn’t depend on cookies or click IDs, and gives you a macro view of channel efficiency.
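The core mechanics can be sketched in a few lines of Python. This is a deliberately tiny, single-channel illustration, not Robyn: it applies a geometric adstock (so spend in one week keeps working in later weeks) and then fits revenue = baseline + slope × adstocked spend with ordinary least squares. The decay rate and the data are hypothetical; a real MMM adds multiple channels, saturation curves, seasonality, and regularized regression.

```python
def geometric_adstock(spend, decay):
    """Each week's effective spend = this week's spend + decay * last week's effective spend."""
    adstocked, carry = [], 0.0
    for s in spend:
        carry = s + decay * carry
        adstocked.append(carry)
    return adstocked

def fit_ols(x, y):
    """Closed-form least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

# Hypothetical weekly Meta spend; revenue is built from a known baseline (1,000) and slope (2.5).
weekly_spend = [100, 0, 50, 200, 0, 0, 150, 80]
ad_effect = geometric_adstock(weekly_spend, decay=0.5)
weekly_revenue = [1000 + 2.5 * a for a in ad_effect]

baseline, slope = fit_ols(ad_effect, weekly_revenue)
print(round(baseline), round(slope, 2))  # 1000 2.5
```

Read the output as "revenue you would expect with zero Meta spend" (baseline) and "incremental revenue per adstocked dollar" (slope), which is the channel-contribution question MMM answers.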

Meta itself now offers Robyn, an open-source MMM tool built on R, released under Meta’s Open Source program. It’s free, it’s surprisingly good for a brand doing $5M+ in revenue, and there’s no excuse not to use it.

What MMM tells you: how much incremental revenue each channel drives at the macro level, and what the diminishing returns curve looks like for Meta spend specifically.

What it doesn’t tell you: what’s working at the campaign or creative level, or how to optimize week to week.

Layer 2: Incrementality Testing (Geo-Holdout or Conversion Lift)

Incrementality testing answers the most important question in paid media: would this sale have happened without the ad? Last-click can’t answer this. MMM estimates it directionally. Only a controlled experiment actually measures it.

There are two practical approaches for brands in the $1M–$50M range:

- Geo-holdout tests: Turn off or reduce Meta spend in a matched set of geographic markets while keeping it on in others, then compare revenue lift. This is the gold standard. Platforms like Measured or Northbeam can operationalize this, or you can DIY it with Google’s open-source CausalImpact package for R.

- Meta’s Conversion Lift tool: Meta holds out a percentage of your audience from seeing ads and tracks conversion rates for both groups. It’s not perfect — it’s self-reported, and Meta has a conflict of interest — but it’s free and gives you directional data on true incrementality within the platform.

A Nielsen study commissioned by Meta found that brands running Meta Conversion Lift tests discovered their actual incremental ROAS was meaningfully different from their reported ROAS — sometimes higher, sometimes dramatically lower. The only way to know which is your situation is to test.

Run a geo-holdout or conversion lift test at least once per quarter. It doesn’t have to be fancy. Even a rough 20% holdout run for two weeks gives you signal you can act on.
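Reading a holdout test comes down to scaling the control group up to the size of the exposed group and subtracting. A minimal Python sketch, assuming an 80/20 user split and entirely hypothetical results; it reports point estimates only, so in practice you would also check the confidence interval your testing tool provides before acting.

```python
def lift_readout(test_users, test_conv, test_rev, control_users, control_conv, control_rev, spend):
    """Point estimates for a user-level holdout test (no significance testing)."""
    scale = test_users / control_users     # size the holdout up to the exposed group
    expected_conv = control_conv * scale   # conversions the test group would have had with no ads
    expected_rev = control_rev * scale
    incremental_conv = test_conv - expected_conv
    incremental_rev = test_rev - expected_rev
    return {
        "incremental_conversions": incremental_conv,
        "lift_pct": 100 * incremental_conv / expected_conv,
        "iroas": incremental_rev / spend,
    }

# Hypothetical 80/20 split over two weeks.
result = lift_readout(
    test_users=800_000, test_conv=2_400, test_rev=240_000,
    control_users=200_000, control_conv=500, control_rev=50_000,
    spend=25_000,
)
print(result)  # roughly 400 incremental conversions, 20% lift, iROAS 1.6
```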

Layer 3: Multi-Touch Attribution (MTA) with Triangulation

MTA tools — Northbeam, Triple Whale, Rockerbox — attempt to assign partial credit to each touchpoint in a customer journey. They’re better than last-click, but they’re not perfect either: iOS 14+ restrictions mean a meaningful percentage of customer journeys are invisible to them.

According to Lotame’s 2023 State of Data Connectivity Report, identity resolution rates have dropped 40–60% since iOS 14.5 launched in 2021. That means MTA tools are working with incomplete maps.

So don’t treat MTA as gospel — treat it as one signal among three. Use it for directional creative and campaign-level decisions. Use MMM for channel-level budget allocation. Use incrementality tests to gut-check both.

Building Your Meta-Specific Measurement Protocol

Here’s the system I’d install if I were running measurement for a $5M DTC brand starting from scratch.

Step 1: Fix Your Foundation (Week 1)

- Install the Meta Pixel and the Conversions API (CAPI). CAPI sends server-side event data directly to Meta, bypassing browser-level tracking limitations. Meta’s own data shows that CAPI can recover 10–20% of events lost to iOS changes. This is non-negotiable in 2024.

- Set up UTM parameters consistently across every Meta campaign. This sounds boring because it is boring, but without clean UTMs you can’t cross-reference Meta data with GA4 or Shopify reports.

- Pull 24 months of weekly spend and revenue data by channel into a spreadsheet. You’ll need this for MMM.
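For the CAPI bullet, here is a rough Python sketch of what a server-side Purchase event looks like before it is sent. The field names (`event_name`, `event_time`, `event_id`, `action_source`, `event_source_url`, `user_data.em`, `custom_data.value`) follow my reading of Meta’s Conversions API documentation; the helper names, the example values, and the choice to only build (not send) the payload are assumptions. Check the current docs and Graph API version before wiring anything like this into production.

```python
import hashlib
import json
import time

def hash_identifier(value):
    """Normalize (trim, lowercase) and SHA-256 hash an identifier such as an email."""
    return hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()

def build_purchase_event(email, order_value, currency, event_id, page_url, event_time=None):
    """Build one server-side Purchase event. Sending it (an HTTPS POST to the Pixel's
    events endpoint with an access token) is deliberately left out of this sketch."""
    return {
        "event_name": "Purchase",
        "event_time": int(event_time if event_time is not None else time.time()),
        # Reuse the same event ID on the browser Pixel event so Meta can deduplicate the pair.
        "event_id": event_id,
        "action_source": "website",
        "event_source_url": page_url,
        "user_data": {"em": [hash_identifier(email)]},
        "custom_data": {"currency": currency, "value": order_value},
    }

# Hypothetical order; the request body wraps events in a "data" list.
payload = {"data": [build_purchase_event("  Jane@Example.com ", 84.00, "USD",
                                         "order-1001", "https://example.com/checkout")]}
print(json.dumps(payload, indent=2))
```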

Step 2: Run a Baseline Incrementality Test (Weeks 2–4)

Before you optimize anything, you need to know what Meta is actually contributing. Pick two or three comparable geographic markets. Pull them out of Meta targeting. Run for two to three weeks. Compare conversion rates and revenue per capita to your control markets.

If your holdout markets perform similarly to your active markets, that’s a red flag — you may be spending on Meta to capture demand that would have converted anyway. If holdout markets show a meaningful dip, you know Meta is genuinely driving incremental purchases.
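One simple way to run that comparison is a difference-in-differences on revenue per capita: measure how much the holdout markets changed versus how much the control markets changed over the same weeks, so seasonality shared by both groups cancels out. This Python sketch uses hypothetical markets and assumes the two groups would have moved in parallel without the holdout; CausalImpact builds a more careful counterfactual with a Bayesian structural time-series model.

```python
def revenue_per_capita(markets):
    """markets: list of (revenue, population) tuples for one group and period."""
    total_revenue = sum(rev for rev, _ in markets)
    total_population = sum(pop for _, pop in markets)
    return total_revenue / total_population

def geo_holdout_effect(control_pre, control_test, holdout_pre, holdout_test):
    """Difference-in-differences on revenue per capita (inputs are per-capita values)."""
    control_change = control_test - control_pre
    counterfactual = holdout_pre + control_change  # where holdout would be if it tracked control
    effect = holdout_test - counterfactual         # negative means revenue fell with Meta off
    return effect, effect / counterfactual

# Hypothetical markets: (revenue, population) before and during the test.
control_pre = revenue_per_capita([(400_000, 200_000), (300_000, 150_000)])
control_test = revenue_per_capita([(440_000, 200_000), (330_000, 150_000)])
holdout_pre = revenue_per_capita([(190_000, 100_000)])
holdout_test = revenue_per_capita([(180_000, 100_000)])

effect, relative = geo_holdout_effect(control_pre, control_test, holdout_pre, holdout_test)
print(f"{effect:+.2f} per capita ({relative:+.1%})")  # -0.30 per capita (-14.3%)
```

A clearly negative effect is the "meaningful dip"; an effect near zero is the red flag.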

The Analytic Partners ROI Genome study (which tracked $4B+ in marketing spend) found that brands running regular incrementality tests improved marketing ROI by an average of 15–25% over two years, simply by reallocating away from channels claiming credit for non-incremental conversions.

Step 3: Stand Up a Lightweight MMM (Month 2)

You don’t need a data science team for this. Robyn (Meta’s open-source MMM tool) has documentation and a growing community. If you’re not comfortable running R scripts, vendors like Recast offer MMM-as-a-service for smaller brands.

What you’re looking for from your first MMM run:

- Meta’s estimated contribution to revenue relative to spend

- The point of diminishing returns — at what weekly spend level does incrementally adding budget to Meta stop producing proportional revenue?

- How Meta compares to your other channels on a cost-per-incremental-revenue basis
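Once the MMM gives you a response curve for Meta, the diminishing-returns question is arithmetic on that curve. This Python sketch assumes a Hill-shaped saturation curve (a form Robyn also supports) with made-up parameters, and treats the point of diminishing returns as the weekly spend where the next dollar returns less than a break-even threshold. Using 1.0 as that threshold is an assumption; many brands would set it from contribution margin instead.

```python
def hill_response(spend, max_revenue, half_saturation, shape):
    """Weekly incremental revenue at a given weekly spend, under a Hill saturation curve."""
    if spend <= 0:
        return 0.0
    return max_revenue * spend**shape / (spend**shape + half_saturation**shape)

def marginal_roas(spend, curve, step=100.0):
    """Revenue returned by the next `step` dollars, per dollar."""
    return (hill_response(spend + step, **curve) - hill_response(spend, **curve)) / step

def diminishing_returns_point(curve, threshold=1.0, step=100.0, max_spend=1_000_000.0):
    """Lowest spend (in `step` increments) where marginal ROAS drops below `threshold`."""
    spend = 0.0
    while spend <= max_spend:
        if marginal_roas(spend, curve, step) < threshold:
            return spend
        spend += step
    return None  # never crossed the threshold in the searched range

# Hypothetical curve parameters, standing in for what an MMM fit might produce.
meta_curve = {"max_revenue": 200_000, "half_saturation": 40_000, "shape": 1.0}
print(diminishing_returns_point(meta_curve))  # somewhere near $49k/week for these parameters
```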

Run MMM quarterly. It’s not a real-time tool — it needs data to accumulate. But quarterly updates give you enough signal to make meaningful budget allocation decisions without over-indexing on short-term noise.

Step 4: Choose an MTA Tool for Campaign-Level Optimization (Months 2–3)

You still need something to help you make weekly decisions about which campaigns and creatives to scale or kill. MMM and incrementality testing can’t tell you that ad creative A is outperforming ad creative B.

For brands under $10M, Triple Whale is a reasonable starting point — it’s purpose-built for Shopify DTC brands and integrates cleanly. For brands doing $10M–$50M with more complex multi-channel mixes, Northbeam gives more granularity and customizable attribution models.

Key settings to configure in any MTA tool:

- Set your attribution window to match your actual purchase cycle. If customers typically take five to seven days to decide, don’t use a 1-day click window.

- Enable blended ROAS views that combine Meta with other channels — siloed platform reporting creates a false competition between channels that doesn’t reflect how customers actually behave.

- Compare MTA-reported ROAS against your incrementality test results at least quarterly. If they diverge significantly, trust the incrementality test.
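One simple way to act on that comparison is to derive a calibration factor from the test and scale the MTA numbers by it. A minimal Python sketch with hypothetical figures; applying a single factor to every campaign is a simplifying assumption (retargeting is often less incremental than prospecting), and the 25% divergence tolerance is arbitrary.

```python
def calibration_factor(test_iroas, mta_roas):
    """How much of MTA-reported ROAS the incrementality test says is real."""
    return test_iroas / mta_roas

def diverges(test_iroas, mta_roas, tolerance=0.25):
    """Flag when MTA ROAS differs from measured iROAS by more than `tolerance` (relative)."""
    return abs(mta_roas - test_iroas) / test_iroas > tolerance

def calibrate(campaign_roas, factor):
    """Scale each campaign's MTA-reported ROAS by the test-derived factor."""
    return {name: roas * factor for name, roas in campaign_roas.items()}

# Hypothetical: the lift test measured 1.5x iROAS while MTA reported 2.5x for the same period.
test_iroas, mta_roas = 1.5, 2.5
if diverges(test_iroas, mta_roas):
    factor = calibration_factor(test_iroas, mta_roas)  # 0.6
    print(calibrate({"prospecting": 3.0, "retargeting": 4.0}, factor))
```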

The Metrics That Actually Matter

Once your three-layer stack is running, stop obsessing over in-platform ROAS. Here’s what to watch instead:

iROAS (Incremental ROAS)

This is the revenue generated per dollar spent that would not have occurred without the ad. A 3x reported ROAS with a 1.2x iROAS means 60% of your attributed revenue would have happened anyway. You’re paying for credit, not causation.
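The arithmetic behind that comparison: incremental revenue is iROAS × spend and reported revenue is reported ROAS × spend, so the non-incremental share is 1 - iROAS / reported ROAS. A tiny Python check, assuming a hypothetical $10,000 spend:

```python
def revenue_split(spend, reported_roas, iroas):
    """Split platform-attributed revenue into incremental and non-incremental parts."""
    reported = spend * reported_roas
    incremental = spend * iroas
    return {
        "reported": reported,
        "incremental": incremental,
        "non_incremental_share": 1 - incremental / reported,
    }

split = revenue_split(spend=10_000, reported_roas=3.0, iroas=1.2)
print(round(split["non_incremental_share"], 2))  # 0.6
```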

MER (Marketing Efficiency Ratio)

Total revenue divided by total ad spend across all channels. This is your north star for overall media efficiency. When MER goes up, your media is working. When it drops, something is broken — and you use the other layers to figure out what.
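A minimal Python sketch of tracking MER week over week and flagging drops worth investigating. The 10% drop threshold and the weekly figures are hypothetical; revenue and spend should be totals across all channels, not Meta alone.

```python
def mer(total_revenue, total_ad_spend):
    """Marketing Efficiency Ratio: total revenue / total ad spend across all channels."""
    return total_revenue / total_ad_spend

def weeks_with_mer_drop(weeks, drop_threshold=0.10):
    """Return labels of weeks whose MER fell more than `drop_threshold` vs. the prior week."""
    flagged, previous = [], None
    for label, revenue, spend in weeks:
        current = mer(revenue, spend)
        if previous is not None and current < previous * (1 - drop_threshold):
            flagged.append(label)
        previous = current
    return flagged

# Hypothetical weekly totals: (week, total revenue, total ad spend across all channels).
weekly_totals = [
    ("2024-W10", 120_000, 30_000),  # MER 4.0
    ("2024-W11", 126_000, 31_500),  # MER 4.0
    ("2024-W12", 105_000, 32_000),  # MER ~3.28: investigate with the other layers
    ("2024-W13", 112_000, 31_000),  # MER ~3.61: recovering
]
print(weeks_with_mer_drop(weekly_totals))  # ['2024-W12']
```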

Andrew Foxwell of Foxwell Digital has popularized MER as a primary metric for Meta advertisers, and it’s become standard practice among sophisticated DTC operators. It’s blunt, but it cuts through attribution noise.

New Customer CAC

Break out new customer acquisition cost separately from returning customer revenue in your Meta campaigns. Meta will happily show you great ROAS on retargeting — people who were already going to buy — while your new customer pipeline quietly atrophies. New customer CAC is the metric that predicts future growth. Existing customer ROAS is largely a fiction.
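Splitting the numbers is straightforward once each Meta-attributed order carries a flag for whether it is the customer’s first order (the field names below are hypothetical). A Python sketch with made-up orders, showing how a healthy-looking blended ROAS can sit on top of an expensive new-customer CAC:

```python
def new_vs_returning(orders, meta_spend):
    """orders: dicts with hypothetical keys 'revenue' and 'is_first_order'."""
    new_revenue = sum(o["revenue"] for o in orders if o["is_first_order"])
    returning_revenue = sum(o["revenue"] for o in orders if not o["is_first_order"])
    new_customers = sum(1 for o in orders if o["is_first_order"])  # one first order per customer
    return {
        "blended_roas": (new_revenue + returning_revenue) / meta_spend,
        "new_customer_roas": new_revenue / meta_spend,
        "new_customer_cac": meta_spend / new_customers if new_customers else float("inf"),
    }

# Hypothetical month: 40 first orders at $100, 120 repeat orders at $150, $10,000 Meta spend.
orders = ([{"revenue": 100, "is_first_order": True}] * 40
          + [{"revenue": 150, "is_first_order": False}] * 120)
print(new_vs_returning(orders, meta_spend=10_000))
# {'blended_roas': 2.2, 'new_customer_roas': 0.4, 'new_customer_cac': 250.0}
```

A 2.2x blended ROAS looks fine; a $250 new-customer CAC against a $100 first order may not be.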

Common Objections (and Why They’re Wrong)

“We don’t have the budget for all these tools.”

Robyn is free. Geo-holdout testing costs you nothing but a few weeks of reduced spend in test markets (which you recover in learning). Google’s CausalImpact package is free. You can build a meaningful measurement foundation for under $500/month in tooling if you’re willing to put in some setup work. The question isn’t whether you can afford the tools; it’s whether you can afford to keep making budget decisions on bad data.

“Our Meta ROAS looks great, why change?”

Because reported ROAS isn’t incremental ROAS. Because Meta has a structural incentive to attribute as many conversions as possible to itself. Because brands I’ve seen with “great” reported ROAS run an incrementality test and discover they’ve been funding Meta’s claim on organic demand. If your framework is working, an incrementality test will confirm it. If it’s not, you’ll know — and you’ll save significant budget.

“This is too complex for our team.”

Start with one thing: run Meta’s native Conversion Lift test on your highest-spend campaign. It takes twenty minutes to set up and Meta manages the holdout automatically. You’ll have your first incrementality data point within two to three weeks. Complexity is a reason to start simple, not a reason to stay blind.

The Competitive Advantage Is Real

Most brands in the $1M–$50M range are not doing this. They’re looking at Meta’s dashboard, seeing a ROAS number, and making budget calls based on it. That’s the default. Which means if you build a proper three-layer measurement framework, you’re operating with materially better information than your competitors.

The Analytic Partners ROI Genome report found that companies investing in marketing measurement outperformed peers by 30% on revenue growth over a five-year horizon. That’s not a marginal edge — that’s the difference between scaling and spinning your wheels.

Attribution is a political problem as much as a technical one. Every channel wants credit. Last-click is the lazy resolution. Incrementality testing is the honest one. Build the stack, run the tests, and make decisions on causation instead of correlation.

That’s how you build a Meta ads measurement framework that actually works.